Do you know the Six Principles of Responsible AI?

What about the Four Pillars of Responsible AI?

Ok, how about the Seven Cs of AI? (Quite like that one – nice wordplay) The Seven Pillars of Trustworthy AI? The Five Pillars of Trustworthy AI? The Eight Dimensions of Responsible AI? The Five…

Well, you get the idea. This could go on almost without end. There is clearly nothing to be gained at this point by coming up with yet another inventory of pillars, columns, pediments or chimneys of responsible AI. To go further, we must become

M E T A.

And no, not the nine-figure hiring bonus Meta, sadly. My letters have still not been responded to…

As any fan of cryptic crossword clues will tell you (and, while I’m not one, the YouTube algorithm has recently decided I ought to be), meta is just metal without the “l”, or to put it another way:

Met the author of An Inconvenient Truth but lost a French fashion magazine

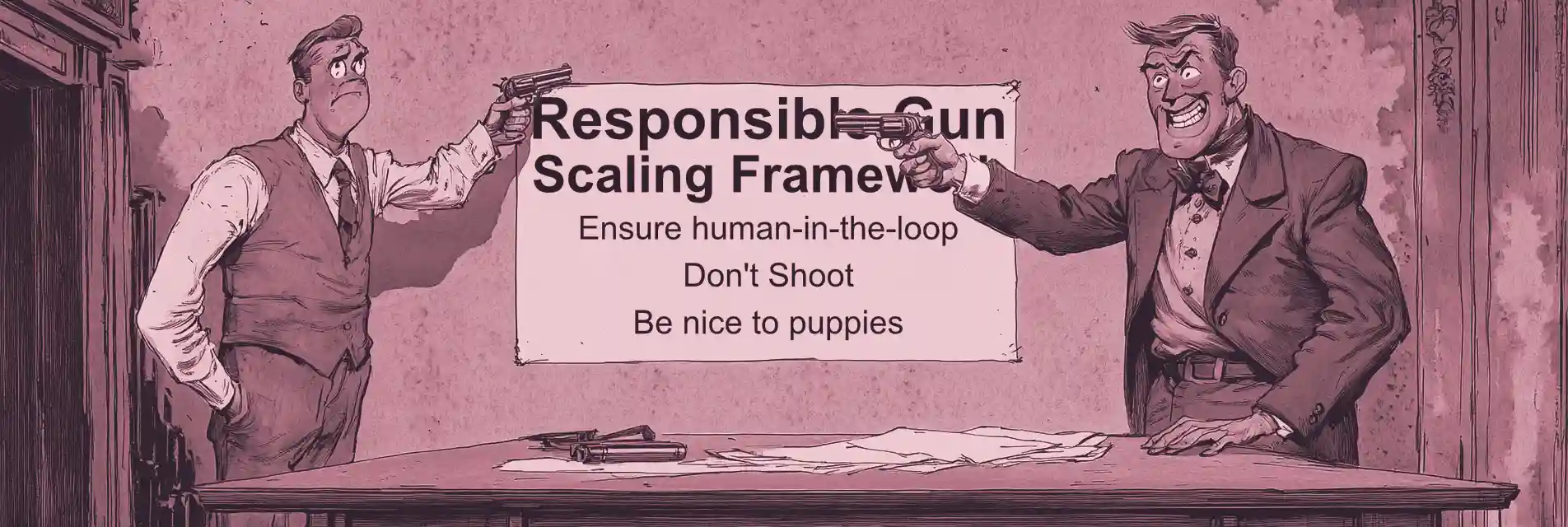

Frankly, there appear to be as many responsible AI frameworks as there are organisations trying to establish a reputation within responsible AI, which is to say, many. One is reminded of the story about a bunch of blind men trying to describe what an elephant looks like through touching parts of it. The man touching the trunk will think an elephant looks like a snake, and while at least one of the others may agree with him, the chap touching its ears may think he’s got hold of some sort of pterodactyl.

What I’m saying is, wouldn’t it be smashing if someone were to drop a load of these responsible AI frameworks into a pot and then generate a synthesis of the whole lot, a kind of meta-framework derived from the commonalities of all of them?

Ok then…

I begin with the following 21 frameworks for responsible AI. These are not all precisely equivalent to each other; some are blog posts on the websites of consultancy firms, others are summaries of the policy position of countries or international organisations. I haven’t set inclusion criteria for these. What Google returned is what we’ve got.

In no particular order:

- Cisco Responsible AI Framework (2024)

- Atlassian Responsible AI Principles (2024)

- SAP What is Responsible AI? (2024)

- DataCamp The 4 Pillars of Responsible AI

- Vocal Media’s 7 Cs of AI (2023)

- European Commission High Level Expert Group on AI Ethics Guidelines (2019)

- Tata Consultancy Services Pillars of Trustworthy AI

- AWS Dimensions of Responsible AI for Amazon Bedrock Applications (2024)

- AWS Responsible use of Machine Learning (2023)

- Blue Prism Principles for Responsible AI

- Microsoft Azure Six Principles for Responsible AI (2024)

- Ascendient Learning Stages of the Responsible AI Lifecycle (2025)

- US Department of Defense Five Principles of AI (2020)

- KPMG Trusted AI Framework (2023)

- Bank Director Elements of Responsible AI (2024)

- Hiroshima Process International Code of Conduct for Organizations Developing Advanced AI Systems (2023)

- Singapore Management University Seven Pillars for the future of Artificial Intelligence (2023)

- McKinsey Responsible AI Principles

- IBM What is Responsible AI?

- UK Responsible Artificial Intelligence Institute

- Kolena Seven Pillars of Responsible AI

I run all of these through ChatGPT together (its large context window means it can ingest a long document).

I ask about the core themes. Are they similar, or do they diverge, reflecting the variety of these documents?

Well, it appears they do in fact quite consistently converge in their themes. These can be summarised thusly:

- Bias. AI should not perpetuate or introduce unfair discrimination. Most of the frameworks discuss methods to address this issue.

- Transparency. AI should be understandable, and its responses traceable.

- Governance. Responsibility for aspects of AI should be assigned properly to people or committees. There should be governance mechanisms in place to control decision-making.

- Data Security. Data used by and generated by AI should be secure.

- System Robustness. AI should be reliable even under adversarial and unexpected circumstances. Strong testing, training and monitoring are important.

- Human-Centric. AI should keep people in control, through human-in-the-loop design, ethical review boards and the like.

I then ask how much these resources differ from one another.

While many core themes are repeated, the technical depth, writing style and tone differ, as one would expect given that these are for varied audiences and by varied organisations.

Some are corporate and summarise a corporate approach at a fairly high level. Some are policy or advocacy documents more focused on societal impact. Some are educational or marketing-driven, and others are more technical, aimed at developers.

Fundamentally, despite their differing formats and purposes, they coalesce around the shared principles of fairness, transparency, accountability, privacy and robustness. However, one wonders if that actually betrays a lack of insight rather than the inverse. After all, such principles apply everywhere: to pretty much any organisation from charity to government, think tank to private corporation, to any software, to any public process. One might even go so far as to say that these principles are just synonyms for the word “responsible”.

From this, I might posit that it is in fact not very useful to ask “What is the good thing?” Everyone knows. It’s intuitive, universal, and to the extent it can be summarised, vague enough to be useless. Frameworks like these are useful only where they define meaningful goals and also motivate coordinated behaviour towards those goals. So we must ask, not what is the good thing, but “What is the cause of the bad thing?”

What is the perverse incentive?

What is the source of corruption?

How do you resolve the trade-off?

What is the reward for short-termism?

How do principles get eroded slowly?

Dario Amodei of Anthropic, one of the frontier labs most visibly focused on responsible AI, has previously commented on the importance of creating a “race to the top”, ensuring that the way the market is arranged rewards long-termism and responsibility over the alternative.

It is of course all very well to say that, but there is always pressure to water it down. This can be seen again and again in the tech sector, over decades. Aspirational mission statements are very easy to write, but if the causes of departure from them are not actually solved, then departing from them is all but guaranteed.

The reason is this: decision-makers will almost always favour ethics in principle, but when pushed to make a real choice between holding to that standard and taking a concrete, immediate profit whose cost is incremental and externalised, they’ll almost always take the profit.

And if they don’t, roll the dice again. And keep rolling it forever. If you can roll a dice forever and never land on a six, congratulations, you’ve cracked responsible AI.
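To put a rough number on the dice metaphor, here is a minimal sketch. It assumes a fair six-sided die and independent rolls, and the function name `chance_of_never_rolling_six` is made up for illustration. The one-in-six figure is only the metaphor's, not a measured rate of anything.

```python
from fractions import Fraction


def chance_of_never_rolling_six(rolls: int) -> Fraction:
    """Probability that `rolls` independent rolls of a fair die contain no six."""
    if rolls < 0:
        raise ValueError("rolls must be non-negative")
    # Each roll avoids a six with probability 5/6, and rolls are independent,
    # so the chance of avoiding a six every time is (5/6) ** rolls.
    return Fraction(5, 6) ** rolls


if __name__ == "__main__":
    for n in (1, 10, 50, 100):
        p = float(chance_of_never_rolling_six(n))
        print(f"{n:>3} rolls: chance of no six yet = {p:.8f}")
```

After ten rolls the chance of still having avoided a six is already down to about 16%, and it keeps shrinking towards zero as the rolls pile up. Under these assumptions, "never" is not an outcome you can count on.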

What matters is not identifying what we should do, but why we don’t do it.